Welcome to 1757

This set of documents is intended to be both a teaching platform and a resource for future generations to learn how we do programming on this team.

If you want to know "how do I run robot code," just start with installation.

Warning: When writing and testing your code on this site, you may encounter issues with Python depending on your browser. If any problems pop up, just try reloading the site.

More to come!

General Resources

| Resource Name | Description |
|---|---|
| How we do game breakdowns | Mandatory watch for every 1757 student. During the season this is typically replaced by a more specific walkthrough that teaches game breakdown. |
| FRC.sh | FRC docs quick reference |
| FRCZero | FRC Zero is a knowledge base for students, mentors, and parents of FIRST Robotics Competition teams. It is a single source of information for all things FIRST Robotics Competition. This includes information on the game, the rules, the robot, the competition, and much more. |
| TheBlueAlliance | The Blue Alliance is the best way to scout, watch, and relive the FIRST Robotics Competition. |
| Statbotics | Modernizing FRC data analytics; FRC match data analysis |
| Mechanism Encyclopedia | Past subsystems, structures, and game pieces, with the solutions used when they were implemented. Best for getting ideas on execution. |
| ChiefDelphi | Home of OpenAlliance, FRC discussion, and everything in between. Typically has a low signal-to-noise ratio, but contains a lot of good collective knowledge. |
| FIRST's own technical resources | Might have some useful bits, mostly about specifics of "how do I..." |
| Competition, Manual, and QA system | Where you can find the game manual. Updated as the season progresses. |
| FRCManual.com | A better, fuzzy-searchable instance of the manual |
| FIRSTUpdatesNow | FRC YouTube channel. Does Behind the Bumpers, Behind the Glass, and information videos. Good for resources. |
| JumpStart | Good "getting started" series |
| 1678's helpful video series | Good set of videos by a good team, covering a lot of different FIRST aspects |

Design-Specific resources

| Name | Description |
|---|---|
| FRCDesign.org | Teaching and learning platform for CAD that 1757 uses |
| How 1757 does multi-doc onshape projects | How we work with multiple documents to allow multiple collaborators on the same project at once |
| 971's spartan series | Bunch of talks by a team who knows what they're talking about |
| 1678's fall workshop series | Bunch of talks by a team who knows what they're talking about |
| Workshop: how to design simple, pt1 | By Karthik |
| Workshop: how to design simple, pt2 | By Adam Heard 254/973/a bunch |
| Workshop: how to design simple, pt3 | By Mike Corsetto of 1678 |
| Workshop: how to set good goals | By Mike Corsetto of 1678 |
| Workshop: how to analyze a game | By Mike Corsetto of 1678 |
| "Modern" FRC Games, Strategy, and Design | By 2910 Jack in the Bot from Mill Creek, WA, 2025 world champions |
| ReCalc | A set of really beneficial calculators. Can figure out what gears you need to achieve a certain gear ratio, general timings of mechanisms based on their ratios, has motor profiles to be able to compare intrinsic values of |

Programming-specific resources

| Name | Description |
|---|---|
| Robotpy ReadTheDocs | Official documentation for everything related to RobotPy |
| RealPython's from-scratch python course | Extra Python tutorials, in case this website is insufficient for you |
| 2021 T Shirt Cannon | Pneumatically controlled T-shirt cannon mounted onto a swerve drive chassis |
| 2022 Robot Code | Contains Limelight vision system, pneumatic intake with rollers, turret, angle alignment system, swerve drive, and a climber |
| 2023 Robot Code | State-dependent lights, 3-jointed arm with inverse kinematics, simple state-driven intake, swerve drive |
| 2024 Robot Code | Complex intake state system, full-field vision system, multi-motor shooter with angle alignment, first use of profiled motion control on motors, elevator and climber mechanisms |
| 2025 Robot Code | Swerve, elevator, intake, full-field vision system, auto alignment to piece, climber |
| Python day 1 video | By Luke, introductory Python lesson |
| The Missing Semester of your CS Education | Linux tutorial series |
| Learning Python 4th edition | Complete supplemental Python tutorial book |
| Programming session 1 | 2022 introductory programming lessons |
| PathPlanner | The system for automated paths we use |
| Limelight Vision | Limelight vision system's main page |
| PhotonVision | PhotonVision vision system for _pi systems |
| FRC Control system diagram | Explains each electrical component in a typical FRC robot |
| Basic WPIlib software stack | Not super relevant, we use a little bit of it though |
| FRC networking basics | How network systems work on an FRC robot |
| CTRE docs | Cross the Road Electronics documentation page |
| Introductory control theory course | Explains PIDF |
| Carbon NOW | Good-looking code snippet generator |
| Cornell Python tutorial series | Fall 2020 Python tutorials at Cornell University |

Discord Servers

| Server | Why? |
|---|---|
| Unofficial FRC Discord Server | Lots of yap and stuff gets lost easily, but it's people discussing FRC |
| NEFRC Discord | New England specific FRC server, has good content and coordination between teams |

Installing RobotPy

As a team, we use Python and RobotPy.

Linux for FRC Development

Linux plays a crucial role in FIRST Robotics Competition (FRC) development, serving as both the foundation for robot control systems and the preferred development environment for many teams. This section covers Linux fundamentals specifically tailored for FRC robotics programming.

Why Linux Matters in FRC

Linux is the underlying operating system behind RoboRIOs, the main control unit for FRC robots. Understanding Linux concepts helps you:

- Debug robot issues: SSH into the RoboRIO to diagnose problems

- Develop efficiently: Use powerful command-line tools for faster development

- Understand system behavior: Know how your robot code interacts with the OS

- Deploy code reliably: Understand the deployment process and troubleshoot issues

- Manage development environments: Set up consistent, reproducible development setups

Linux in the FRC Ecosystem

graph TD

A[Development Machine] --> B[Robot Code]

A --> C[Git Repository]

A --> D[Build Tools]

B --> E[RoboRIO]

E --> F[Linux OS]

F --> G[Python Runtime]

F --> H[System Services]

F --> I[Network Stack]

C --> J[Version Control]

C --> K[Collaboration]

D --> L[RobotPy]

D --> M[WPILib Tools]

subgraph "RoboRIO Environment"

E

F

G

H

I

end

subgraph "Development Tools"

N[SSH]

O[Git]

P[Python]

Q[Text Editors]

R[Terminal]

end

A --> N

A --> O

A --> P

A --> Q

A --> R

style E fill:#1976d2

style F fill:#4caf50

style A fill:#ff9800

Key Linux Concepts for FRC

File System Structure

Understanding the Linux file system helps when working with RoboRIO files:

- /home/lvuser/ - User home directory on RoboRIO

- /usr/local/frc/ - FRC-specific files and libraries

- /etc/ - System configuration files

- /var/log/ - System and application logs

Processes and Services

- systemd: Service management system used on RoboRIO

- Process monitoring: Understanding how robot code runs as a service

- Resource management: CPU, memory, and I/O considerations

Networking

- Network interfaces: Ethernet, WiFi, USB connections

- Port forwarding: Accessing robot services from development machine

- SSH tunneling: Secure remote access to robot systems

Development Environment Setup

A proper Linux development environment for FRC includes:

- Terminal and Shell: Command-line interface for efficiency

- Git and Version Control: Code management and collaboration

- Python Environment: Virtual environments and package management

- SSH Tools: Remote access to robot systems

- Text Editors/IDEs: Code editing with FRC-specific features

What's Covered in This Section

- RoboRIO System Internals: Deep dive into RoboRIO's Linux environment

- Development Tools: Git, lazygit, bash scripting, and SSH workflows

- Python Development: Setting up Python environments for FRC development

- System Administration: Managing Linux systems for robotics

Quick Start Commands

Here are essential commands every FRC developer should know:

# Connect to RoboRIO via SSH

ssh admin@roborio-TEAM-frc.local

# Check robot code status

sudo systemctl status robot

# View robot code logs

journalctl -u robot -f

# Deploy robot code (from development machine)

python robot.py deploy

# Check network connectivity

ping roborio-TEAM-frc.local

Learning Path

- Start with basics: Command line navigation and file operations

- Learn Git: Version control for team collaboration

- Master SSH: Remote access and troubleshooting

- Understand processes: How robot code runs on the RoboRIO

- Practice debugging: Using logs and system tools to solve problems

Whether you're new to Linux or looking to deepen your understanding for FRC development, this section provides practical knowledge you'll use throughout your robotics journey.

RoboRIO System Internals

The RoboRIO runs a specialized Linux distribution optimized for real-time robotics control. Understanding its internals helps you debug issues, optimize performance, and make the most of your robot's capabilities.

System Overview

The RoboRIO uses a NI Linux Real-Time operating system, which is based on:

- Kernel: Linux with real-time patches

- Architecture: ARM Cortex-A9 (ARMv7)

- Init System: systemd

- Package Manager: opkg (OpenWrt-based)

graph TD

A[RoboRIO Hardware] --> B[NI Linux Real-Time]

B --> C[systemd Init System]

B --> D[FRC Runtime]

B --> E[Network Services]

C --> F[Robot Code Service]

C --> G[FRC Services]

C --> H[System Services]

D --> I[Python Interpreter]

D --> J[WPILib HAL]

D --> K[FPGA Interface]

E --> L[SSH Server]

E --> M[FTP Server]

E --> N[Web Interface]

style B fill:#4caf50

style D fill:#2196f3

style F fill:#ff9800

File System Structure

Understanding the RoboRIO's file system is crucial for debugging and development:

Key Directories

/home/lvuser/ # User home directory (where your code runs)

├── py/ # Python robot code deployment location

├── .cache/ # Application cache files

└── .config/ # User configuration files

/usr/local/frc/ # FRC-specific installations

├── JRE/ # Java Runtime Environment

├── bin/ # FRC utilities and tools

├── lib/ # FRC libraries

└── third-party/ # Third-party libraries

/var/local/natinst/ # National Instruments specific files

├── log/ # System logs

└── config/ # NI configuration

/etc/ # System configuration files

├── natinst/ # NI-specific configuration

├── systemd/ # Service definitions

└── network/ # Network configuration

/opt/ # Optional software packages

└── ...

/tmp/ # Temporary files (cleared on reboot)

Important Files

- /home/lvuser/robot.py - Main robot code entry point

- /etc/natinst/share/scs_imagemetadata.ini - System image information

- /var/log/messages - System log messages

- /etc/hostname - System hostname configuration

System Services

The RoboRIO uses systemd to manage services. Here are the key services for FRC:

Robot Code Service

Your robot code runs as a systemd service called robot:

# Check robot code status

sudo systemctl status robot

# Start robot code

sudo systemctl start robot

# Stop robot code

sudo systemctl stop robot

# Restart robot code

sudo systemctl restart robot

# Enable robot code to start on boot

sudo systemctl enable robot

# View robot code service definition

cat /lib/systemd/system/robot.service

FRC-Related Services

# FRC Driver Station communication

sudo systemctl status frcds

# Network communication services

sudo systemctl status netconsole-host

sudo systemctl status systemWebServer

# Time synchronization

sudo systemctl status ntp

SSH Access and Remote Management

SSH is your primary tool for accessing and managing the RoboRIO remotely.

Connecting to the RoboRIO

# Standard connection (replace TEAM with your team number)

ssh admin@roborio-TEAM-frc.local

# Alternative IP addresses

ssh admin@10.TE.AM.2 # Ethernet connection

ssh admin@172.22.11.2 # USB connection

# With verbose output for debugging

ssh -v admin@roborio-TEAM-frc.local

Default Credentials

- Username: admin

- Password: (empty - just press Enter)

SSH Key Setup

For secure, passwordless access, set up SSH keys:

# Generate SSH key pair (on development machine)

ssh-keygen -t rsa -b 4096 -C "your_email@example.com"

# Copy public key to RoboRIO

ssh-copy-id admin@roborio-TEAM-frc.local

# Or manually copy the key

cat ~/.ssh/id_rsa.pub | ssh admin@roborio-TEAM-frc.local "mkdir -p ~/.ssh && cat >> ~/.ssh/authorized_keys"

Useful SSH Commands

# File transfer with SCP

scp robot.py admin@roborio-TEAM-frc.local:/home/lvuser/

scp admin@roborio-TEAM-frc.local:/var/log/messages ./roborio-logs.txt

# Port forwarding for web interfaces

ssh -L 8080:localhost:80 admin@roborio-TEAM-frc.local

# Run single commands remotely

ssh admin@roborio-TEAM-frc.local "systemctl status robot"

# Interactive session with X11 forwarding

ssh -X admin@roborio-TEAM-frc.local

Process Management

Understanding processes helps with debugging and performance monitoring:

Viewing Processes

# List all processes

ps aux

# Show process tree

pstree

# Real-time process monitoring

top

htop # If available

# Find specific processes

ps aux | grep python

pgrep -f robot.py

Process Control

# Kill a specific process

kill <PID>

killall python3

# Force kill unresponsive process

kill -9 <PID>

# Send signals to processes

kill -USR1 <PID> # Send user-defined signal

System Monitoring and Diagnostics

System Information

# System information

uname -a # Kernel and system info

cat /proc/version # Detailed kernel version

cat /proc/cpuinfo # CPU information

cat /proc/meminfo # Memory information

# Disk usage

df -h # File system usage

du -h /home/lvuser/ # Directory size

# Network interfaces

ip addr show # Network interface information

ip route show # Routing table

Log Analysis

Logs are crucial for debugging robot issues:

# View robot code logs

journalctl -u robot # All robot service logs

journalctl -u robot -f # Follow robot logs in real-time

journalctl -u robot --since "1 hour ago"

# System logs

tail -f /var/log/messages # Real-time system messages

dmesg # Kernel ring buffer

dmesg | tail # Recent kernel messages

# Search logs for specific errors

grep -i error /var/log/messages

journalctl -u robot | grep -i exception
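The grep searches above can also be done in Python once a log has been copied off the robot. This is a minimal sketch, not an official tool: the function name `find_problem_lines` and the keyword list are assumptions chosen for illustration.

```python
import re
from typing import Iterable, Iterator

# Keywords are an assumption for this sketch; adjust them to your own logs.
PROBLEM_PATTERN = re.compile(r"error|exception|traceback", re.IGNORECASE)


def find_problem_lines(lines: Iterable[str]) -> Iterator[tuple[int, str]]:
    """Yield (line_number, text) for lines that mention a problem keyword."""
    for number, line in enumerate(lines, start=1):
        if PROBLEM_PATTERN.search(line):
            yield number, line.rstrip("\n")


if __name__ == "__main__":
    # Made-up sample lines standing in for a saved log file.
    sample = [
        "robot: starting up\n",
        "robot: ERROR: CAN timeout\n",
        "robot: Traceback (most recent call last):\n",
    ]
    for number, text in find_problem_lines(sample):
        print(f"{number}: {text}")
```

To scan a real file, pass an open file object instead of the sample list, for example the `roborio-logs.txt` copy made with `scp`.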

Performance Monitoring

# CPU and memory usage

top -p "$(pgrep -d, -f python)" # Monitor robot code specifically (comma-separated PIDs)

# I/O statistics

iostat # If available

# Network statistics

netstat -i # Network interface statistics

ss -tuln # Socket statistics

Robot Code Deployment Process

Understanding how code gets deployed helps with troubleshooting:

Deployment Steps

- Build: Code is prepared on development machine

- Transfer: Files are copied to RoboRIO via SSH/SFTP

- Permissions: Execute permissions are set

- Service Restart: Robot service is restarted

# Manual deployment simulation

scp -r src/* admin@roborio-TEAM-frc.local:/home/lvuser/py/

ssh admin@roborio-TEAM-frc.local "sudo systemctl restart robot"
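The transfer and restart steps can be sketched in Python as a dry run that only builds the commands. The helper name `manual_deploy_commands` is hypothetical, and the `admin` user, destination path, and `robot` service name are taken from this page rather than verified against a robot.

```python
import shlex


def manual_deploy_commands(host, files, user="admin", dest="/home/lvuser/py/"):
    """Build (but do not run) the scp and ssh commands for a manual deploy."""
    if not files:
        raise ValueError("nothing to deploy")
    target = f"{user}@{host}"
    return [
        # Transfer: copy the listed files or directories to the robot
        ["scp", "-r", *files, f"{target}:{dest}"],
        # Service restart: same command as the manual simulation
        ["ssh", target, "sudo systemctl restart robot"],
    ]


if __name__ == "__main__":
    for command in manual_deploy_commands("roborio-1757-frc.local", ["robot.py", "constants.py"]):
        # shlex.join shows the command as you would type it in a shell
        print(shlex.join(command))
```

Returning argument lists instead of one string means each command can later be handed to `subprocess.run` without shell quoting problems.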

Deployment Locations

- Robot code: /home/lvuser/py/

- Backup location: /home/lvuser/py.bak/

- Service file: /lib/systemd/system/robot.service

Troubleshooting Common Issues

Robot Code Won't Start

# Check service status

sudo systemctl status robot

# View detailed logs

journalctl -u robot -n 50

# Check file permissions

ls -la /home/lvuser/py/robot.py

# Ensure file is executable

chmod +x /home/lvuser/py/robot.py

Network Connectivity Issues

# Test network connectivity

ping 8.8.8.8 # Internet connectivity

ping 10.TE.AM.1 # Router connectivity

# Check network configuration

ip addr show

ip route show

# Restart network services

sudo systemctl restart networking

Performance Issues

# Check system load

uptime

cat /proc/loadavg

# Monitor resource usage

top

iostat 1 5

# Check for memory leaks

cat /proc/meminfo | grep -i available
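To track memory over time rather than eyeballing one reading, the `/proc/meminfo` text can be parsed. A minimal sketch, assuming the usual `Name:   value kB` layout; the function names are made up for this example.

```python
def parse_meminfo(text: str) -> dict[str, int]:
    """Map each /proc/meminfo field to its numeric value (kB for most fields)."""
    values = {}
    for line in text.splitlines():
        name, sep, rest = line.partition(":")
        if not sep:
            continue  # skip anything that is not "Name: value"
        fields = rest.split()
        if fields and fields[0].isdigit():
            values[name.strip()] = int(fields[0])
    return values


def available_fraction(text: str) -> float:
    """Return MemAvailable / MemTotal as a number between 0 and 1."""
    info = parse_meminfo(text)
    return info["MemAvailable"] / info["MemTotal"]


if __name__ == "__main__":
    # Made-up numbers; on the robot, read the real file instead:
    #   text = open("/proc/meminfo").read()
    sample = "MemTotal:  250000 kB\nMemFree:  50000 kB\nMemAvailable:  100000 kB\n"
    print(f"{available_fraction(sample):.0%} of memory available")
```

If the available fraction keeps shrinking while the robot sits idle, that points toward a leak worth investigating.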

Disk Space Issues

# Check disk usage

df -h

# Find large files

du -h /home/lvuser/ | sort -h

# Clean temporary files

sudo rm -rf /tmp/*

sudo journalctl --vacuum-time=7d # Keep only 7 days of logs

Advanced System Configuration

Custom Services

You can create custom systemd services for additional functionality:

# Example: /lib/systemd/system/custom-logger.service

[Unit]

Description=Custom Robot Logger

After=robot.service

[Service]

Type=simple

User=lvuser

ExecStart=/home/lvuser/custom_logger.py

Restart=always

RestartSec=5

[Install]

WantedBy=multi-user.target

Network Configuration

The RoboRIO's network setup can be customized:

# View network configuration

ls /etc/systemd/network/

# Modify network settings (be careful!)

sudo vi /etc/systemd/network/10-eth0.network

Best Practices

- Regular Backups: Keep backups of working configurations

- Log Monitoring: Regularly check logs for warnings

- Resource Monitoring: Monitor CPU and memory usage during competition

- Network Health: Ensure stable network connections

- Service Management: Use systemd properly for service control

Security Considerations

- Change default passwords: Set strong passwords for admin account

- SSH Key Authentication: Use SSH keys instead of passwords

- Network Segmentation: Isolate robot network from other systems

- Regular Updates: Keep system packages updated when possible

- Access Control: Limit who has SSH access to the robot

Understanding these RoboRIO internals will make you a more effective FRC developer, capable of diagnosing and solving complex robot issues quickly and efficiently.

Development Tools on Linux for FRC

This page covers core development tools used in a Linux environment for FRC: Git, lazygit, Bash, and SSH. It focuses on practical workflows you’ll use daily when building and deploying robot code.

Git: Version Control for Teams

Git helps you collaborate, review changes, and maintain a clean history.

Essential Setup

# Set your identity (one-time)

git config --global user.name "Your Name"

git config --global user.email "you@example.com"

# Recommended: colored, helpful output

git config --global color.ui auto

git config --global init.defaultBranch main

# Optional: better logging alias

git config --global alias.lg "log --oneline --graph --decorate --all"

Daily Workflow

# Clone the repository

git clone https://github.com/1757WestwoodRobotics/prog-docs

# Create a new feature branch

git checkout -b feature/robotpy-logging

# Stage and commit changes

git add src/tools/linux/*.md

git commit -m "docs(linux): add RoboRIO internals page"

# Rebase with latest main

git fetch origin

git rebase origin/main

# Push your branch

git push -u origin feature/robotpy-logging

Reviewing Changes

# Inspect staged changes

git diff --staged

# See recent history

git lg

lazygit: TUI for Git

lazygit is a terminal UI that accelerates common Git tasks.

Installation (examples)

# Arch Linux

sudo pacman -S lazygit

# Debian/Ubuntu

sudo apt install lazygit

Usage

lazygit

- Stage/unstage files, commit, rebase, resolve conflicts interactively

- Quickly view diffs and logs without memorizing commands

Bash: Your Command-Line Companion

Bash lets you automate tasks and work efficiently.

Basics You’ll Use Often

# Navigate

pwd; ls -la; cd src/tools/linux

# Search in repository

grep -R "roborio" -n src

# Create directories & files

mkdir -p src/tools/linux

printf "# New Page\n" > src/tools/linux/new-page.md

# Permissions

chmod +x scripts/deploy.sh

Helpful Shortcuts

# Use aliases in ~/.bashrc or ~/.zshrc

alias gs='git status'

alias gl='git lg'

alias ll='ls -alF'

SSH: Secure Remote Access

SSH connects you to the RoboRIO and other Linux machines.

Common RoboRIO Hosts

- mDNS: roborio-TEAM-frc.local

- USB: 172.22.11.2

- Ethernet: 10.TE.AM.2
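The TEAM and TE.AM placeholders above are filled in from your team number. A small sketch of that substitution, where the function name `roborio_hosts` is made up and only team numbers up to four digits are handled:

```python
def roborio_hosts(team: int) -> dict[str, str]:
    """Return the usual RoboRIO addresses for a team number."""
    if not 1 <= team <= 9999:
        raise ValueError("this sketch only handles team numbers 1-9999")
    # 10.TE.AM.2: TE is the team number divided by 100, AM is the remainder
    te, am = divmod(team, 100)
    return {
        "mdns": f"roborio-{team}-frc.local",
        "usb": "172.22.11.2",
        "ethernet": f"10.{te}.{am}.2",
    }


if __name__ == "__main__":
    for name, host in roborio_hosts(1757).items():
        print(f"{name:9} {host}")
```

For team 1757 this gives `roborio-1757-frc.local` and `10.17.57.2`, which match the addresses used elsewhere in these docs.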

Useful Commands

# Open a shell on the RoboRIO

ssh admin@roborio-TEAM-frc.local

# Copy files

scp ./robot.py admin@roborio-TEAM-frc.local:/home/lvuser/

# Forward a local port to remote

ssh -L 8080:localhost:80 admin@roborio-TEAM-frc.local

# Run a remote command

ssh admin@roborio-TEAM-frc.local "systemctl status robot"

SSH Keys (recommended)

ssh-keygen -t ed25519 -C "your_email@example.com"

ssh-copy-id admin@roborio-TEAM-frc.local

Tips for Team Collaboration

- Commit small, logical changes with clear messages

- Use branches for features and fixes

- Pull or rebase frequently to avoid large conflicts

- Use code reviews (PRs) to maintain quality

These tools form the backbone of an efficient Linux-based FRC workflow.

Python Development Environment on Linux for FRC

Setting up an efficient Python development environment on Linux is crucial for FRC robotics programming. This guide covers the essential tools, configurations, and workflows specific to RobotPy development.

Python Environment Management

Managing Python environments properly ensures consistent, reproducible builds across team members and prevents dependency conflicts.

System Python vs Virtual Environments

graph TD

A[System Python] --> B[Global Packages]

A --> C[System Tools]

D[Virtual Environment] --> E[Project Packages]

D --> F[Isolated Dependencies]

D --> G[Team Consistency]

H[FRC Project] --> D

H --> I[RobotPy]

H --> J[Third-party Libraries]

style D fill:#4caf50

style H fill:#2196f3

style A fill:#ff9800

Best Practice: Always use virtual environments for FRC projects. pipenv is the recommended tool for managing virtual environments and dependencies, as it simplifies the workflow and ensures consistency.

Using Pipenv

pipenv is a tool that automatically creates and manages a virtualenv for your projects, as well as adds/removes packages from your Pipfile as you install/uninstall packages.

First, install pipenv:

# Using pip

pip install --user pipenv

# Or using your system's package manager (Debian/Ubuntu)

sudo apt install pipenv

To create a new project environment:

# Navigate to your robot code directory

cd ~/frc-robot-2024

# Create a Pipfile and install dependencies (if any)

# You can specify a Python version

pipenv install --python 3.11

# Activate the virtual environment

pipenv shell

# Verify activation (should show virtual env path)

which python

To install packages:

# Install a package and add it to Pipfile

pipenv install "robotpy[all]" # Quotes keep shells like zsh from expanding the brackets

# Install a development-only dependency

pipenv install --dev pytest

Managing Dependencies with Pipfile

pipenv uses Pipfile and Pipfile.lock to manage dependencies. The Pipfile is where you declare your project's dependencies, while Pipfile.lock ensures deterministic builds by locking down the exact versions of the dependencies.

Here is an example Pipfile for an FRC project:

[packages]

robotpy = {extras = ["all"], version = "*"}

robotpy-pathplannerlib = "*"

[dev-packages]

pytest = "*"

black = "*"

flake8 = "*"

mypy = "*"

[requires]

python_version = "3.11"
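Because Pipfile.lock is plain JSON, it is easy to check which exact versions a teammate's environment will get. A minimal sketch, assuming the standard lock layout with `default` and `develop` sections; the function name and the version strings in the sample are placeholders, not real pins.

```python
import json


def pinned_versions(lock_text: str, section: str = "default") -> dict[str, str]:
    """Return {package: pinned version} for one section of a Pipfile.lock."""
    lock = json.loads(lock_text)
    return {
        name: entry["version"]
        for name, entry in sorted(lock.get(section, {}).items())
        if "version" in entry  # some entries (e.g. git sources) may have no version pin
    }


if __name__ == "__main__":
    # Placeholder content; in a project, use open("Pipfile.lock").read()
    sample = json.dumps({
        "_meta": {},
        "default": {"robotpy": {"version": "==0.0.0"}},
        "develop": {"pytest": {"version": "==0.0.0"}},
    })
    print(pinned_versions(sample))
    print(pinned_versions(sample, section="develop"))
```

Comparing this output between two machines is a quick way to confirm that both are on the same lock file.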

Development Tools and IDE Setup

Text Editors and IDEs

VS Code Setup

Install extensions for Python and FRC development:

{
  "recommendations": [
    "ms-python.python",
    "ms-python.black-formatter"
  ]
}

VS Code's Python extension will automatically detect and use the pipenv environment.

VS Code settings for FRC projects (.vscode/settings.json):

{
  "[python]": {
    "editor.defaultFormatter": "ms-python.black-formatter"
  },
  "files.exclude": {
    "**/__pycache__": true,
    "**/.pytest_cache": true
  }
}

Vim/Neovim Setup

For terminal-based development, configure Python language server:

" Using vim-plug

Plug 'neovim/nvim-lspconfig'

Plug 'hrsh7th/nvim-cmp'

Plug 'hrsh7th/cmp-nvim-lsp'

Plug 'psf/black'

" LSP configuration

lua << EOF

require'lspconfig'.pyright.setup{}

EOF

Code Quality Tools

Install code quality tools as development dependencies:

pipenv install --dev black flake8 mypy

Black (Code Formatting)

# Format all Python files

pipenv run black src/

# Check formatting without changes

pipenv run black --check src/

Configuration in pyproject.toml:

[tool.black]
line-length = 88
target-version = ['py311']
include = '\.pyi?$'
extend-exclude = '''
/(
  # Exclude auto-generated files
  build
  | dist
)/
'''

Flake8 (Linting)

# Lint all files

pipenv run flake8 src/

# Configuration file: .flake8

[flake8]

max-line-length = 88

extend-ignore = E203, W503

exclude = .git,__pycache__,build,dist

MyPy (Type Checking)

# Type check code

pipenv run mypy src/

# Configuration in mypy.ini

[mypy]

python_version = 3.11

warn_return_any = True

warn_unused_configs = True

disallow_untyped_defs = True

[mypy-robotpy.*]

ignore_missing_imports = True

FRC-Specific Python Setup

RobotPy Installation

# Install RobotPy with all components

pipenv install "robotpy[all]"

# Download and install RoboRIO packages

pipenv run python -m robotpy_installer download-python

# Install specific components only

pipenv install robotpy robotpy-wpilib robotpy-commands-v2

Project Structure

Recommended directory structure for FRC Python projects:

frc-robot-2024/
├── robot.py            # Main robot entry point
├── constants.py        # Robot constants
├── Pipfile             # Python dependencies
├── Pipfile.lock        # Pinned dependency versions
├── pyproject.toml      # Project configuration
├── .gitignore          # Git ignore patterns
├── src/                # Source code
│   ├── __init__.py
│   ├── subsystems/     # Robot subsystems
│   ├── commands/       # Robot commands
│   └── utils/          # Utility functions
├── tests/              # Unit tests
│   ├── __init__.py
│   └── test_robot.py
└── scripts/            # Deployment and utility scripts
    └── deploy.sh

Environment Variables

pipenv automatically manages environment variables within the virtual environment. For project-specific variables, you can create a .env file in your project root.

# .env file

ROBOTPY_TEAM_NUMBER=1757

ROBOTPY_ROBOT_HOST="roborio-1757-frc.local"

pipenv will automatically load this file.

For convenience, you can add aliases to your ~/.bashrc or ~/.zshrc:

# FRC-specific aliases

alias deploy='pipenv run python robot.py deploy'

alias sim='pipenv run python robot.py sim'

alias test-robot='pipenv run pytest tests/'

Testing and Debugging

Unit Testing with pytest

# tests/test_drivetrain.py
import pytest

from src.subsystems.drivetrain import Drivetrain


class TestDrivetrain:
    def setup_method(self):
        self.drivetrain = Drivetrain()

    def test_initialization(self):
        assert self.drivetrain is not None

    def test_tank_drive(self):
        # Test tank drive functionality
        self.drivetrain.tank_drive(0.5, -0.5)
        # Add assertions for expected behavior

Run tests:

# Run all tests

pipenv run pytest

# Run with coverage

pipenv run pytest --cov=src

# Run specific test file

pipenv run pytest tests/test_drivetrain.py -v
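The "add assertions" step is easier when the subsystem talks to something you can inspect. The sketch below is self-contained and entirely hypothetical: `FakeMotor` and this `Drivetrain` are stand-ins written for the example, not the team's real classes, and the clamping behavior is an assumption.

```python
class FakeMotor:
    """Records the last commanded output instead of driving hardware."""

    def __init__(self):
        self.output = 0.0

    def set(self, value):
        self.output = value


class Drivetrain:
    """Hypothetical tank drivetrain that clamps outputs to [-1, 1]."""

    def __init__(self, left=None, right=None):
        self.left = left if left is not None else FakeMotor()
        self.right = right if right is not None else FakeMotor()

    def tank_drive(self, left_speed, right_speed):
        self.left.set(max(-1.0, min(1.0, left_speed)))
        self.right.set(max(-1.0, min(1.0, right_speed)))


def test_tank_drive_sets_both_sides():
    drivetrain = Drivetrain()
    drivetrain.tank_drive(0.5, -0.5)
    assert drivetrain.left.output == 0.5
    assert drivetrain.right.output == -0.5


def test_tank_drive_clamps_out_of_range_input():
    drivetrain = Drivetrain()
    drivetrain.tank_drive(2.0, -3.0)
    assert drivetrain.left.output == 1.0
    assert drivetrain.right.output == -1.0


if __name__ == "__main__":
    test_tank_drive_sets_both_sides()
    test_tank_drive_clamps_out_of_range_input()
    print("all checks passed")
```

pytest collects the `test_` functions automatically, so the `__main__` block is only there to let the file run on its own.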

Debugging Techniques

Using Python Debugger (pdb)

import pdb


def autonomous(self):
    pdb.set_trace()  # Breakpoint
    # Your code here

Remote Debugging on RoboRIO

Install debugpy as a dev dependency:

pipenv install --dev debugpy

# For remote debugging (use carefully)

import debugpy

debugpy.listen(("0.0.0.0", 5678)) # Listen on all interfaces; a bare port only listens on 127.0.0.1

debugpy.wait_for_client() # Optional: wait for debugger

Performance Optimization

Profiling Robot Code

import cProfile
import pstats


def profile_robot_code():
    pr = cProfile.Profile()
    pr.enable()

    # Your robot code here

    pr.disable()
    stats = pstats.Stats(pr)
    stats.sort_stats('cumulative')
    stats.print_stats(10)  # Top 10 functions

Memory Management

import gc
import os

import psutil  # Third-party package: pipenv install psutil


def monitor_memory():
    process = psutil.Process(os.getpid())
    memory_info = process.memory_info()
    print(f"RSS: {memory_info.rss / 1024 / 1024:.2f} MB")
    print(f"VMS: {memory_info.vms / 1024 / 1024:.2f} MB")

    # Force garbage collection
    gc.collect()

Deployment Automation

Deployment Script

Create scripts/deploy.sh:

#!/bin/bash

set -e # Exit on any error

echo "Running tests..."

pipenv run pytest tests/ -v

echo "Checking code quality..."

pipenv run black --check src/

pipenv run flake8 src/

echo "Deploying to robot..."

pipenv run python robot.py deploy

echo "Deployment complete!"

Make executable:

chmod +x scripts/deploy.sh

Git Hooks for Quality

Create .git/hooks/pre-commit:

#!/bin/bash

# Stop at the first failing check so the commit is aborted
set -e
trap 'echo "Pre-commit checks failed!"' ERR

# Run code formatting
pipenv run black src/ tests/

# Run linting
pipenv run flake8 src/ tests/

# Run type checking
pipenv run mypy src/

# Run tests
pipenv run pytest tests/

Team Collaboration Best Practices

Environment Consistency

- Document Python version: Use a .python-version file or specify it in Pipfile.

- Pin dependencies: Pipfile.lock handles this automatically. Commit Pipfile.lock to your repository.

- Share configurations: Include editor configs in repository.

- Automate setup: Provide setup scripts for new team members.

Setup Script for New Members

Create setup.sh:

#!/bin/bash

echo "Setting up FRC development environment..."

# Check for pipenv

if ! command -v pipenv &> /dev/null; then
    echo "pipenv could not be found. Please install it first."
    echo "Run: pip install --user pipenv"
    exit 1
fi

# Install dependencies from Pipfile.lock

pipenv install --dev

# Install RobotPy for robot

pipenv run python -m robotpy_installer download-python

echo "Setup complete! Run 'pipenv shell' to activate."

Troubleshooting Common Issues

Import Errors

# Verify virtual environment python

pipenv run which python

# Check dependency graph

pipenv graph

# Reinstall packages

pipenv uninstall robotpy && pipenv install robotpy

Deployment Issues

# Check robot connectivity

ping roborio-1757-frc.local

# Verify robotpy installation on robot

pipenv run python -m robotpy_installer list

# Manual deployment

pipenv run python -m robotpy_installer install-python

pipenv run python robot.py deploy --builtin

Performance Issues

# Monitor robot resources via SSH

ssh admin@roborio-1757-frc.local "top -b -n 1"

# Check for memory leaks

ssh admin@roborio-1757-frc.local "cat /proc/meminfo"

This comprehensive Python development setup ensures efficient, consistent, and maintainable FRC robot code development on Linux systems.

System Administration for FRC Linux Systems

System administration skills are essential for maintaining reliable FRC development environments and troubleshooting robot systems. This page covers practical Linux administration focused on FRC scenarios.

User and Permission Management

Understanding Linux users and permissions is crucial for both development machines and RoboRIO management.

User Management Basics

# View current user

whoami

id

# List all users

cat /etc/passwd | cut -d: -f1

# Add user to group (useful for development)

sudo usermod -aG dialout $USER # For serial port access

sudo usermod -aG docker $USER # For Docker access

# Check group membership

groups

groups username

File Permissions

# View permissions

ls -la

# Change permissions

chmod +x script.sh # Make executable

chmod 644 file.txt # Read/write for owner, read for others

chmod 755 directory/ # Standard directory permissions

# Change ownership

sudo chown user:group file.txt

sudo chown -R user:group directory/

# Special permissions for team sharing

chmod g+s shared_directory # Set group ID bit
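If the octal numbers are hard to read, Python's standard `stat` module can turn them into the same `rwx` string that `ls -la` prints. A small sketch; the helper name `describe_mode` is made up for illustration.

```python
import stat


def describe_mode(mode: int, directory: bool = False) -> str:
    """Render an octal permission value the way `ls -l` would show it."""
    file_type = stat.S_IFDIR if directory else stat.S_IFREG
    return stat.filemode(file_type | mode)


if __name__ == "__main__":
    print(describe_mode(0o644))                    # regular file, as in chmod 644
    print(describe_mode(0o755, directory=True))    # directory, as in chmod 755
    print(describe_mode(0o2775, directory=True))   # group-writable directory with the set-group-ID bit
```

The set-group-ID bit from `chmod g+s` shows up as an `s` in the group execute position.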

Sudo Configuration

For team development machines, configure sudo properly:

# Edit sudoers file (ALWAYS use visudo)

sudo visudo

# Allow users in frc group to run robot deployment without password

%frc ALL=(ALL) NOPASSWD: /usr/bin/systemctl restart robot

# Allow specific commands without password

username ALL=(ALL) NOPASSWD: /bin/systemctl restart robot, /bin/journalctl

Network Administration

Network configuration is critical for robot connectivity and development workflows.

Network Interface Configuration

# View network interfaces

ip addr show

ip link show

# Bring interface up/down

sudo ip link set eth0 up

sudo ip link set eth0 down

# Configure static IP (temporary)

sudo ip addr add 10.17.57.100/24 dev eth0

sudo ip route add default via 10.17.57.1

# View routing table

ip route show

NetworkManager (Modern Linux)

# List connections

nmcli connection show

# Connect to WiFi

nmcli device wifi connect "SSID" password "password"

# Create connection profile for robot network

nmcli connection add type ethernet con-name "robot-eth" ifname eth0 \
    ipv4.addresses 10.17.57.100/24 ipv4.gateway 10.17.57.1 \
    ipv4.dns 8.8.8.8 ipv4.method manual

# Activate connection

nmcli connection up "robot-eth"

Persistent Network Configuration

systemd-networkd (RoboRIO-style)

# /etc/systemd/network/10-robot-eth.network

[Match]

Name=eth0

[Network]

Address=10.17.57.100/24

Gateway=10.17.57.1

DNS=8.8.8.8

Traditional /etc/network/interfaces (Debian/Ubuntu)

# /etc/network/interfaces

auto eth0

iface eth0 inet static

address 10.17.57.100

netmask 255.255.255.0

gateway 10.17.57.1

dns-nameservers 8.8.8.8

Service Management with systemd

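systemd is the init system used on most modern Linux distributions. The RoboRIO's NI Linux Real-Time image uses a different init system, so check that systemctl exists on a target before relying on the commands below.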

Basic Service Commands

# Service status and control

sudo systemctl status robot

sudo systemctl start robot

sudo systemctl stop robot

sudo systemctl restart robot

sudo systemctl reload robot

# Enable/disable services

sudo systemctl enable robot # Start on boot

sudo systemctl disable robot # Don't start on boot

# List all services

systemctl list-units --type=service

systemctl list-units --failed

Creating Custom Services

Create a service file for custom robot utilities:

# /etc/systemd/system/robot-logger.service

[Unit]

Description=Robot Data Logger

After=network.target

Wants=network.target

[Service]

Type=simple

User=lvuser

Group=lvuser

WorkingDirectory=/home/lvuser

ExecStart=/home/lvuser/logger/robot_logger.py

Restart=always

RestartSec=5

StandardOutput=journal

StandardError=journal

[Install]

WantedBy=multi-user.target

Manage the custom service:

# Reload systemd configuration

sudo systemctl daemon-reload

# Enable and start service

sudo systemctl enable robot-logger

sudo systemctl start robot-logger

# Check logs

journalctl -u robot-logger -f

Process and Resource Management

Monitor and manage system resources effectively.

Process Monitoring

# Real-time process monitoring

top

htop # Enhanced version

# Process tree

pstree

ps aux --forest

# Find processes by name

pgrep python

pkill -f robot.py

# Monitor specific process

top -p $(pgrep -f robot.py)

Resource Monitoring

# System load and uptime

uptime

cat /proc/loadavg

# Memory usage

free -h

cat /proc/meminfo

# Disk usage

df -h

du -h /home/lvuser | sort -h

# I/O statistics (if iostat available)

iostat -x 1 5

Setting Resource Limits

Control resource usage for critical processes:

# Limit CPU usage (nice values)

nice -n 10 python robot.py # Lower priority

renice -n 5 -p <PID> # Change priority

# Memory limits with systemd

# In service file:

[Service]

MemoryLimit=512M

CPUShares=1024

Log Management

Proper log management is essential for debugging and monitoring.

journalctl (systemd logs)

# View all logs

journalctl

# Follow logs in real-time

journalctl -f

# Service-specific logs

journalctl -u robot

journalctl -u robot -f --since "1 hour ago"

# Filter by priority

journalctl -p err # Error messages only

journalctl -p warning # Warning and above

# Boot logs

journalctl -b # Current boot

journalctl -b -1 # Previous boot

Traditional Log Files

# Common log locations

tail -f /var/log/messages # System messages

tail -f /var/log/syslog # System log (Debian/Ubuntu)

tail -f /var/log/dmesg # Kernel messages

# Application logs

tail -f /var/log/robot.log # Custom application logs

Log Rotation and Cleanup

# Configure journald limits

sudo vi /etc/systemd/journald.conf

# SystemMaxUse=100M

# SystemMaxFileSize=10M

# MaxRetentionSec=7d

# Manual cleanup

sudo journalctl --vacuum-time=7d # Keep 7 days

sudo journalctl --vacuum-size=100M # Keep 100MB

# Traditional log rotation (logrotate)

sudo vi /etc/logrotate.d/robot

/var/log/robot.log {

daily

rotate 7

compress

delaycompress

missingok

create 644 lvuser lvuser

}
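If the log file is written by your own Python program, rotation can also be handled inside the program. The sketch below uses the standard library's `RotatingFileHandler`; the logger name, the tiny size limit, and the temporary directory are assumptions chosen so the example is quick to run. It rotates by size, not daily like the logrotate config above.

```python
import logging
import logging.handlers
import os
import tempfile

def make_rotating_logger(path: str, max_bytes: int, backups: int) -> logging.Logger:
    """Create a logger that starts a new file once the current one reaches max_bytes."""
    logger = logging.getLogger("robot-example")  # hypothetical logger name
    logger.setLevel(logging.INFO)
    handler = logging.handlers.RotatingFileHandler(
        path, maxBytes=max_bytes, backupCount=backups
    )
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger.addHandler(handler)
    return logger

# Write into a temporary directory so nothing real is touched
log_dir = tempfile.mkdtemp()
log_path = os.path.join(log_dir, "robot.log")
logger = make_rotating_logger(log_path, max_bytes=200, backups=2)

for i in range(50):
    logger.info("loop iteration %d", i)

log_files = sorted(os.listdir(log_dir))
print(log_files)  # the current file plus at most 2 rotated backups
```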

Package Management

Keep systems updated and manage software packages.

APT (Debian/Ubuntu)

# Update package lists

sudo apt update

# Upgrade packages

sudo apt upgrade

sudo apt full-upgrade

# Install packages

sudo apt install git python3-pip lazygit

# Remove packages

sudo apt remove package-name

sudo apt purge package-name # Remove config files too

# Search packages

apt search robotpy

apt show python3-pip

YUM/DNF (RHEL/Fedora)

# Update system

sudo dnf update

# Install packages

sudo dnf install git python3-pip

# Search and info

dnf search robotpy

dnf info python3-pip

Pacman (Arch Linux)

# Update system

sudo pacman -Syu

# Install packages

sudo pacman -S git python-pip lazygit

# Search packages

pacman -Ss robotpy

Security and Hardening

Basic security practices for FRC development systems.

SSH Security

# Generate strong SSH keys

ssh-keygen -t ed25519 -C "your_email@example.com"

# Configure SSH client

vi ~/.ssh/config

Host roborio-*

User admin

IdentityFile ~/.ssh/id_ed25519

StrictHostKeyChecking no

UserKnownHostsFile /dev/null

# SSH server hardening (on development machines)

sudo vi /etc/ssh/sshd_config

# Disable password authentication

PasswordAuthentication no

PubkeyAuthentication yes

# Change default port

Port 2222

Firewall Configuration

# UFW (Uncomplicated Firewall) - Ubuntu

sudo ufw enable

sudo ufw default deny incoming

sudo ufw allow ssh

sudo ufw allow from 10.17.57.0/24 # Allow robot network

# iptables (traditional)

sudo iptables -L # List rules

sudo iptables-save # View current rules

File System Security

# Set secure permissions on sensitive files

chmod 600 ~/.ssh/id_ed25519

chmod 644 ~/.ssh/id_ed25519.pub

chmod 700 ~/.ssh

# Find files with incorrect permissions

find /home/user -type f -perm /o+w # World-writable files

find /home/user -type f -perm /g+w # Group-writable files

Backup and Recovery

Protect important data and configurations.

Configuration Backups

# Backup important configuration files

sudo tar -czf /backup/system-configs-$(date +%Y%m%d).tar.gz \

/etc/ssh/ \

/etc/systemd/system/ \

/etc/network/ \

/home/lvuser/.ssh/

# Robot code backup

tar -czf robot-backup-$(date +%Y%m%d).tar.gz \

robot.py constants.py src/ requirements.txt

Git-based Configuration Management

# Version control system configurations

cd /etc

sudo git init

sudo git add ssh/ systemd/system/ network/

sudo git commit -m "Initial system configuration"

# Track changes

sudo git diff

sudo git add -A

sudo git commit -m "Updated SSH configuration"

Automation and Scripting

Automate common administration tasks.

System Monitoring Script

#!/bin/bash

# monitor-robot.sh

LOG_FILE="/var/log/robot-monitor.log"

log_message() {

echo "[$(date '+%Y-%m-%d %H:%M:%S')] $1" | tee -a "$LOG_FILE"

}

# Check robot connectivity

if ping -c 1 roborio-1757-frc.local &> /dev/null; then

log_message "Robot is reachable"

else

log_message "WARNING: Robot is not reachable"

fi

# Check disk space

DISK_USAGE=$(df /home | tail -1 | awk '{print $5}' | sed 's/%//')

if [ "$DISK_USAGE" -gt 80 ]; then

log_message "WARNING: Disk usage is ${DISK_USAGE}%"

fi

# Check system load

LOAD=$(uptime | awk '{print $NF}')

log_message "System load: $LOAD"
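Since the team writes Python, the disk space check from the script above can also be sketched with the standard library. The function names and the 80% threshold mirror the shell script but are otherwise illustrative. `shutil.disk_usage` computes usage slightly differently from `df`, so the two numbers may differ by a few percent.

```python
import shutil

def usage_percent(used: int, total: int) -> int:
    """Percentage of the disk in use, rounded to a whole number."""
    return round(100 * used / total)

def check_disk(path: str = "/", threshold: int = 80) -> str:
    """Return a message, prefixed with WARNING when usage is above the threshold."""
    usage = shutil.disk_usage(path)
    percent = usage_percent(usage.used, usage.total)
    if percent > threshold:
        return f"WARNING: Disk usage is {percent}%"
    return f"Disk usage is {percent}%"

print(check_disk("/"))
```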

Automated Deployment Script

#!/bin/bash

# deploy-robot.sh

set -e

ROBOT_HOST="roborio-1757-frc.local"

ROBOT_USER="admin"

ROBOT_PATH="/home/lvuser"

echo "Starting deployment to $ROBOT_HOST..."

# Pre-deployment checks

if ! ping -c 1 "$ROBOT_HOST" &> /dev/null; then

echo "ERROR: Cannot reach $ROBOT_HOST"

exit 1

fi

# Deploy code

echo "Copying robot code..."

scp -r src/ robot.py constants.py requirements.txt \

"$ROBOT_USER@$ROBOT_HOST:$ROBOT_PATH/"

# Install dependencies and restart

echo "Installing dependencies and restarting robot service..."

ssh "$ROBOT_USER@$ROBOT_HOST" << 'EOF'

cd /home/lvuser

pip3 install -r requirements.txt

sudo systemctl restart robot

EOF

echo "Deployment complete!"

Troubleshooting Common Issues

Boot Issues

# Check boot logs

journalctl -b

dmesg | less

# Check failed services

systemctl --failed

# Rescue mode (if system won't boot properly)

# Boot with systemd.unit=rescue.target

Network Issues

# Diagnose network connectivity

ip addr show

ip route show

ping 8.8.8.8

nslookup google.com

# Reset network configuration

sudo systemctl restart networking

sudo systemctl restart NetworkManager

Performance Issues

# Check system resources

top

iotop # I/O monitoring

netstat -i # Network interface statistics

# Check for high CPU processes

ps aux | sort -nr -k 3 | head -10

# Check for high memory processes

ps aux | sort -nr -k 4 | head -10

This system administration knowledge provides the foundation for maintaining reliable, secure, and efficient Linux systems in FRC environments. Regular practice with these tools and techniques will make you an effective system administrator for your team's robotics infrastructure.

Python

Python! The programming language of choice for 1757. You can download Python from https://python.org, but I highly suggest installing it through a package manager instead. More instructions can be found in the installation section.

Below is a REPL you can mess with

# This is an editable document

print("Hello world!")

The next few sections are tutorials if you need to learn python

Absolutely every single section in this python area is editable, please feel free to mess with every example!!!

Welcome to Python!

This brief subsection is intended to teach some of the basics of python assuming you already have some basic understanding of programming as a concept.

This is based on the Learn X in Y Minutes article on Python

Basic basic super basic console IO

If we want to talk to the world, we can use print to produce output. By default, a bare expression on its own line does not print anything.

print("Hello World!")

1+1 # does not get printed

print(1+1) # does print out 2

If we want to take input from the user, we can use the input function

print("What is your name?")

name = input()

print(f"Hello, {name}") # you will learn about every part of this later

Comments

# Single line comments start with a number symbol.

""" Multiline strings can be written

using three "s, and are often used

as documentation.

"""

# Comments are pieces of writing that don't get executed

print("I will be printed!")

# print("I'm commented out! I won't be printed!")

Values and Expressions

Here is some basic Python terminology regarding data in the language.

Values

In Python, we represent data using values. These are encodings that Python recognizes as representing some type of data. Here are some examples of different values in Python:

5, 3.7, 'a', 'Hello World!', True, [1,2,3,4]

Expressions

We can chain values together with operators (functions that can be performed on values) to create expressions. Here are a few examples:

1 + 1 # evaluates to 2

2 - 3 + 7 + (8 // 2) # evaluates to 10

4 ** 3 # evaluates to 64

True or False # evaluates to True

True and False # evaluates to False

not True # evaluates to False

'Hello' + ' ' + 'World!' # evaluates to 'Hello World!'

'Hello' # evaluates to 'Hello'

When given an expression, Python will evaluate it (i.e. perform operations according to their order/precedence) into a value. An expression that evaluates to itself is what is known as a literal.

5 # evaluates to 5. This expression *literally* means exactly what is written: 5

'robot' # evaluates to 'robot'. Again, this expression is literally what it means

2 + 2 # evaluates to 4. This is NOT a literal as it isn't *literally* what is written.

Variables

We have already seen in the previous sections that we can print out values and expressions of values that are evaluated by Python. However, it is often necessary to remember a given value beyond the scope of a single print statement or line of code. This is what motivates the need for a variable, which stores a value in computer memory.

You can think of a variable as a "storage box" that has some kind of "datatype" assigned to them. Each of these boxes has a value associated with it.

Here is how you assign a variable, or fill that "box." This is known as an assignment statement, or an initialization statement if this is the first time you are introducing a variable.

a = 1

Let's break it down character by character:

- a: the variable name

- =: this operator is how we assign to variables. Most notably, it is explicitly assignment: it always sets the left side equal to the right side, never the other way. You will learn about == in the conditionals section.

- 1: the value we are assigning to the variable a. For now, we will assign a to be an integer, or type int (a nonfractional number). We will go over the different datatypes in the next section.

Note that Python doesn't care about the spacing in assignment statements, so all examples below yield equivalent results

a=1

a =1

a= 1

a = 1

a = 1

a= 1

Unlike other languages, python will dynamically infer the datatype (i.e. you don't have to predeclare a type like in Java or C/C++) for a given variable. Ever since python 3.6 you can also explicitly mark the type, but at runtime it will ignore these markers. For programming best practices it is recommended that you assign types in the following manner:

a: int = 1

this will tell your IDE (a place where you edit code, such as VS Code) that it should expect the type of a to be an int or integer. Despite explicitly being told, Python will not explicitly throw any error if you set a variable to a different type at runtime

a: int = 1 # set the variable a to be an int with value 1

print(a) # => 1

a = "hi" # change the value and type of `a` at runtime

print(a) # => hi

It is good code practice to have types be static as it helps significantly with the debugging process

As mentioned before, the equals sign is explicitly assignment

a = 2 # create variable a with value 2

a = a + 1 # let's set a to itself plus 1

print(a) # => 3

for most basic arithmetic operations +, -, * and /, we can do the following substitution:

a = 5

a = a + 2

a = a - 2

a = a * 5

a = a / 2.5

is the same as

a = 5

a += 2

a -= 2

a *= 5

a /= 2.5

it even works for the modulo (%) operation!

a = 49

b = 49

print(a,b) # => 49 49

a %= 5

b %= 5

print(a,b) # => 4 4

We can assign multiple variables at once

a, b = 1, 2 # multi assignment

print(a) # => 1

print(b) # => 2

# now lets swap the variables!

a, b = b, a

print(a) # => 2

print(b) # => 1

Datatypes

Python has a couple of primitive datatypes, or general structures, that you can use. A datatype or type is a set of values AND the operations that can be called upon them. The function of operators (i.e. +, =, -) may change depending on the type or may not be defined.

There are two categories of types:

- "Immutable" types: Types whose internal data cannot be changed. Includes int, bool, floats, strings, and tuples.

- "Mutable" types: Types whose internal data can be changed. Includes lists, dictionaries, and sets.

The built-in type() function returns the type of a given input.

w = True

x = 5

y = 5.0

z = [5]

print(type(w)) # => <class 'bool'>

print(type(x)) # => <class 'int'>

print(type(y)) # => <class 'float'>

print(type(z)) # => <class 'list'>

Integer

Integers are whole numbers, with no fractional part. Examples include

3 # is the number 3

We can do math on integers

# Math is what you would expect

print(1 + 1) # => 2

print(8 - 1) # => 7

print(10 * 2) # => 20

print(35 / 5) # => 7.0

Note that the last answer is 7.0, not 7. That's our next datatype: float, or floating point number.

If you want to do specifically integer division, that is done with //

# Floor division rounds towards negative infinity

print(5 // 3) # => 1

print(-5 // 3) # => -2

print(5.0 // 3.0) # => 1.0 # works on floats too

print(-5.0 // 3.0) # => -2.0

Float

Floating point numbers, or floats, in Python are numbers that have a decimal point. Most of the time, values will "upgrade" to float in any operation where the fractional part matters.

print(1.1 + 2.2) # => 3.3000000000000003 (floats are approximations)

print(10.0 / 3) # => 3.3333333333333335

print(10 / 3.0) # => 3.3333333333333335 note: / division will always return a float

print(10 / 3) # => 3.3333333333333335 regardless of what types were used

print(6.6 * 5.4) # => approximately 35.64
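Because floats are stored as binary approximations, comparing them with == can give surprising answers. A minimal sketch of the usual workaround, using `math.isclose` from the standard library:

```python
import math

total = 1.1 + 2.2

exactly_equal = total == 3.3             # exact comparison
close_enough = math.isclose(total, 3.3)  # comparison with a small tolerance

print(exactly_equal)  # False
print(close_enough)   # True
```

When comparing sensor readings or computed distances, a tolerance-based check like this is usually what you want.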

Advanced Math

# Modulo operation, aka remainder

print(7 % 3) # => 1

# the result of i % j has the same sign as j, unlike C

print(-7 % 3) # => 2

# Exponentiation (x**y, x to the yth power)

print(2**3) # => 8

# Enforce precedence with parentheses

print(1 + 3 * 2) # => 7

print((1 + 3) * 2) # => 8
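Floor division and modulo are designed to fit together: the quotient times the divisor, plus the remainder, always gives back the original number. The built-in `divmod` returns both at once. The sketch below checks this for a few sign combinations.

```python
results = []
for a, b in [(7, 3), (-7, 3), (7, -3)]:
    quotient, remainder = divmod(a, b)  # same as (a // b, a % b)
    results.append((quotient, remainder))
    print(a, b, quotient, remainder, quotient * b + remainder == a)

# 7 3 2 1 True
# -7 3 -3 2 True
# 7 -3 -3 -2 True
```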

Boolean

A boolean is literally True or False. Note that in Python both are written with a capital first letter, unlike in many other languages.

Booleans are really important in CS; once we get to conditionals this becomes more apparent

# negate with not

print(not True) # => False

print(not False) # => True

Woah if a boolean is not one thing, its the other thing!!!

# Boolean Operators

# Note "and" and "or" are case-sensitive

print(True and False) # => False

print(False or True) # => True

If you are coming from other languages: where you would write || and &&, Python uses or and and, which reads more like English

# True and False are actually 1 and 0 but with different keywords, you can do really cursed things with this info

print(True + True) # => 2

print(True * 8) # => 8

print(False - 5) # => -5

We'll get into comparison operations in a second, but abusing the True is 1 and False is 0 principle we can do some more funny things

# Comparison operators look at the numerical value of True and False

print(0 == False) # => True

print(2 > True) # => True

print(2 == True) # => False

print(-5 != False) # => True
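One use of the "True is 1" principle that is not cursed: adding up comparisons counts how many of them are true. A small sketch with made-up numbers:

```python
# Each comparison is a boolean; adding them counts the True ones
hits = (5 > 3) + (2 > 7) + (1 == 1)

print(hits)        # 2
print(type(hits))  # <class 'int'>
```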

Strings

Strings are sequences of characters: letters, digits, punctuation, and any other Unicode character.

# Strings are created with " or '

print("This is a string.")

print('This is also a string.')

# Strings can be added too

print("Hello " + "world!") # => "Hello world!"

# String literals (but not variables) can be concatenated without using '+'

print("Hello " "world!") # => "Hello world!"

We can find the length of a string with the len function

print(len("This is a string")) # => 16

Once we talk more about variables the following becomes really useful

# Since Python 3.6, you can use f-strings or formatted string literals.

name = "Reiko"

print(f"She said her name is {name}.") # => "She said her name is Reiko."

# Any valid Python expression inside these braces is evaluated and inserted into the string.

name = "Reiko"

print(f"{name} is {len(name)} characters long.") # => "Reiko is 5 characters long."

if we want to include the literal character " in a string, we can use the escape character \ like the following

print("these are \"quotes\" and are cool!")

Here is a list of characters that need to be escaped; try them out:

- \' single quote, if you are using ' to quote

- \\ the backslash character

- \n new line

- \r carriage return

- \t tab

- \b backspace

- \f form feed

print("edit me and try some of the escape characters!")

Methods of strings

We can call a couple functions on things of string type

# str.index(n) returns the index of the first occurrence of substring `n` in a string,

# raises a ValueError if the substring is not found. (We will talk more about exceptions in a later section).

word = 'robor' # avoid naming a variable `str`, that would hide the built-in type

print(word.index('ro')) # => 0

print(word[0]) # => r

print(word.index('bor')) # => 2

print(word[2]) # => b

# str.isalnum() returns 'True' if characters in the string are alphanumeric

# (i.e. each is a number or letter) and there is at least one character.

str1 = 'robor123'

str2 = '""'

str3 = '' # strings can have no characters! (this is an empty string)

print(str1.isalnum()) # => True

print(str2.isalnum()) # => False

print(str3.isalnum()) # => False

# str.upper() returns a copy of the string with all the cased characters converted to uppercase.

str1 = 'ww1757'

print(str1.upper()) # => 'WW1757'

print(str1) # => 'ww1757'

# note the original string is unaffected as str.upper() returns a copy!

# str.lower() returns a copy of the string with all the cased characters converted to lowercase.

str1 = 'WestWood1757'

print(str1.lower()) # => 'westwood1757'

print(str1) # => 'WestWood1757'

# note the original string is unaffected as str.lower() returns a copy!

Python has powerful string manipulation methods already built into the language. You should take advantage of these where possible. For an exhaustive list, check out the official Python docs.

After this, try out Challenge 1!

Lists

Lists are just collections of other datatypes. They most literally list things out.

a = [1,2,3,4] # creating a list of 4 elements

print(a) # => [1,2,3,4]

just like with strings, we can get the length of lists

a = [1,2,3,4]

print(len(a)) # => 4

lists aren't limited to any given type of object, nor do they need to contain only one type of object (but most times they really should!)

a_list = ["Lettuce", "Tomatoes", "Pickles", 5]

print(a_list)

to get any given value of a list, we index that list

In Python, list indices start at 0. This means to access the first element in a list, we use list_name[0]

coolList = ["First", "Second", "Third", "Fourth"]

print(coolList[0]) # => "First"

print(coolList[2]) # => "Third"

print(coolList[1]) # => "Second"

this may seem confusing at first, but has some neat properties that make our lives in most cases easier.

slices

We can also take slices of lists, or sublists within the same list. Taking a slice creates a new list.

The syntax for taking a slice is list[first:last]. The slice includes the element at index first and goes up to, but does not include, the element at index last.

coolList = ["First", "Second", "Third", "Fourth"]

print(coolList[1:3]) # => ["Second", "Third"]

print(coolList[0:2]) # => ["First", "Second"]

print(coolList[0:1]) # => ["First"]

# remember! Its up to but not including the last!

We can also take slices from a given index onwards, or from the start up to a given index, by leaving out the first or last index

a = [1,2,3,4,5,6,7,8,9,10]

print(a[1:]) # [2,3,4,5,6,7,8,9,10]

print(a[:7]) # [1,2,3,4,5,6,7]

print(a[:]) # [1,2,3,4,5,6,7,8,9,10]

# this is valid! It's a quick way to make a copy of the list

print(a[10:]) # []

# slicing past the end returns an empty list (indexing with a[10] would raise an IndexError)

by using a negative index, we can actually take the last elements from lists

a = [1,2,3,4,5]

print(a[-1]) # => 5

print(a[0:-1]) # => [1,2,3,4]

# using negative, we can take some fun parts of lists

print(a[-2:2]) # [] there are no elements between 4 and 2, in that order

strings as lists

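Strings behave a lot like lists of characters: they can be indexed and sliced the same way. (Unlike lists, strings are immutable, so you can't change a character in place.)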

stringy = "Stringy McStringFace"

print(stringy[0:7]) # => Stringy

Hence why we can run operations like len on them

Changing step

The complete slice syntax is li[start:end:step]. The step lets us skip through the list instead of taking every element.

a = [1,2,3,4,5,6,7,8]

print(a[::2]) # => [1,3,5,7]

print(a[1::2]) # => [2,4,6,8]

print(a[1:5:2]) # => [2,4]

# we can reverse a list with a negative iteration

print(a[::-1]) # => [8,7,6,5,4,3,2,1]
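A short sketch tying the slicing rules together. It shows that a slice is a new list (changing it leaves the original alone) and that the same start:end:step syntax works on strings. The variable names are only for illustration.

```python
original = [1, 2, 3, 4, 5]

middle = original[1:4]  # new list holding the elements at indices 1, 2, 3
middle[0] = 99          # changing the slice does not touch the original

print(original)  # [1, 2, 3, 4, 5]
print(middle)    # [99, 3, 4]

word = "robot"
backwards = word[::-1]  # a negative step walks the string in reverse
print(backwards)  # tobor
```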

Methods of lists

We can call a couple of things on list objects

# li.index(n) returns the index of the first found element in a list

li = [0,2,4,6,8]

print(li.index(0)) # => 0

print(li[0]) # => 0

print(li.index(2)) # => 1

print(li[1]) # => 2

We can also add things onto a list with the append method

li = [0,2,4,6,8]

print(li) # => [0,2,4,6,8]

li.append(10) # Adds on the element "10" to the end

print(li) # => [0,2,4,6,8,10]

If we want to put elements in a specific location, we can use the insert method; its usage is li.insert(index, value)

We can remove the element at a given index with the del keyword, as in del li[index]

We can also remove the first instance of a value with the remove method; its usage is li.remove(value)

li = [1,3,4]

print(li) # => [1,3,4]

li.insert(1,2) # inserts that element

print(li) # => [1,2,3,4]

del li[3]

print(li) # => [1,2,3]

li.remove(2)

print(li) # => [1,3]
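The difference between removing by index and removing by value is easy to mix up, so here is a small sketch with a list that contains a repeated value. It also uses `pop`, another built-in list method, which removes the last element and hands it back.

```python
li = [10, 20, 30, 20]

li.remove(20)  # by VALUE: removes the first 20 it finds
after_remove = li[:]
print(after_remove)  # [10, 30, 20]

del li[0]  # by INDEX: removes whatever is at position 0
after_del = li[:]
print(after_del)  # [30, 20]

last = li.pop()  # removes the last element and returns it
print(last)  # 20
print(li)    # [30]
```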

We can add lists onto other lists.

li = [1,2,3]

otherLi = [4,5,6]

print(li) # => [1,2,3]

print(otherLi) # => [4,5,6]

print(li + otherLi) # => [1,2,3,4,5,6]

# note that it doesn't modify either of the lists (because a new list is created!)

print(li) # => [1,2,3]

print(otherLi) # => [4,5,6]

if we do want to modify the lists, we can use the extend method to take the elements of one list and put it into the other

li = [1,2,3]

li2 = [4,5,6]

print(li) # => [1,2,3]

print(li2) # => [4,5,6]

li.extend(li2)

print(li) # => [1,2,3,4,5,6]

as we've explored earlier, the len function can get us the length of a list

a = ["tomato", "potato", "o"]

print(len(a)) # => 3

Python has powerful list manipulation methods that are built into the language. To find an exhaustive list of python list methods, check out the official Python Docs.

Element checking

We can use the in keyword to figure out if a given element is in a list

a = [1,2,3,4]

print(1 in a) # => True the value of `1` is in the list `a`

print(5 in a) # => False

print(0 in a) # => False

List =

So far we've seen that the assignment statement = copies the contents of one variable into the other. This is true for ints, strings, bools, and floats.

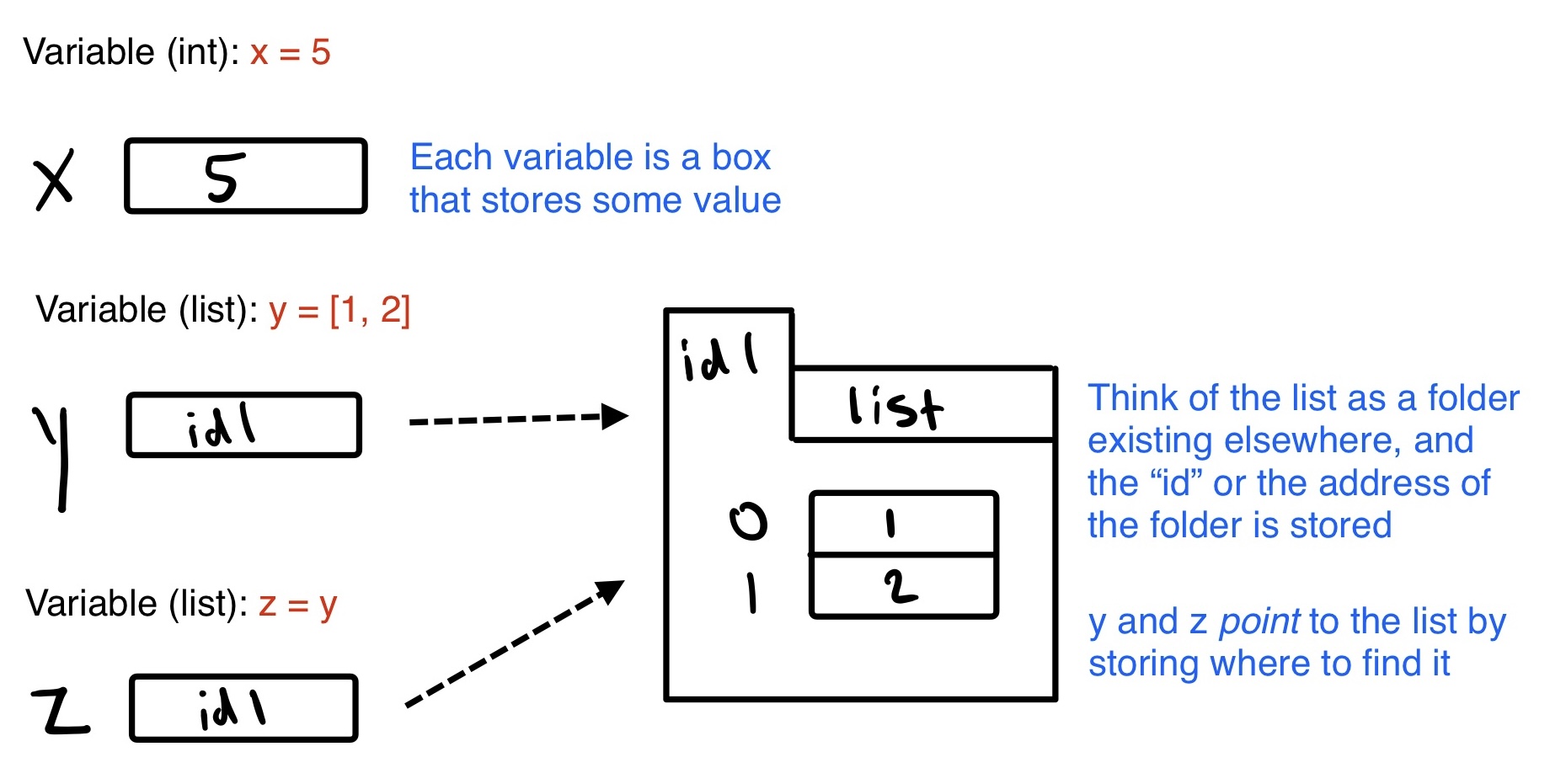

However, variables for lists store an address of where we can find the actual list in computer memory. We say the variable "points" to the list as opposed to "storing" the list. The = statement copies the address as opposed to copying the list's contents. See the following diagram:

The following example illustrates the consequences of this, being that modifying a variable that points to a list means we see the modification from any variable that points to it.

a = [1,2,3,4] # a POINTS to this list, which is stored in memory

b = [5,6,7,8] # b POINTS to this list, which is stored in memory

print(a) # => [1,2,3,4]

print(b) # => [5,6,7,8]

a = b # a now POINTS to what b POINTS to

print(a) # => [5,6,7,8]

print(b) # => [5,6,7,8]

b.append(9) # modifying b

print(a) # => [5,6,7,8,9]

print(b) # => [5,6,7,8,9]

a.append(10) # modifying through a this time

print(a) # => [5,6,7,8,9,10]

print(b) # => [5,6,7,8,9,10]

# As both variables point to the same list in memory,

# modifying one modifies both

If we wanted to copy the values but keep the lists independent, so that modifying one does not modify the other, we need to build a new list instead of assigning. Taking a full slice with a[:] (see the slices section) does this, and so does copying the elements one at a time with a loop, which we will discuss in the loops section. Note that == checks if two lists have the same values and length, whereas a new keyword, is, checks if two variables point to the same list. This is discussed more in conditionals below.
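As a sketch of the difference between pointing at the same list and holding an independent copy, the example below uses three built-in ways to copy a list (`copy()`, `list()`, and a full slice). The variable names are only for illustration.

```python
a = [1, 2, 3]

alias = a        # points to the SAME list as a
copy1 = a.copy() # three ways to build a new, independent list
copy2 = list(a)
copy3 = a[:]

a.append(4)      # modify the original

print(alias)  # [1, 2, 3, 4]  (follows the change)
print(copy1)  # [1, 2, 3]     (unaffected)

print(alias is a)      # True: same object
print(copy1 == copy2)  # True: same values
print(copy1 is copy2)  # False: different objects
```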

At this point, try out challenge 2!

Conditionals

Comparison

Let's begin by comparing values; there are a couple of ways to do this

#Equality is ==

print(1 == 1) # => True

print(2 == 1) # => False

# Inequality is !=

print(1 != 1) # => False

print(2 != 1) # => True

# More comparisons

print(1 < 10) # => True

print(1 > 10) # => False

print(2 <= 2) # => True

print(2 >= 2) # => True

# Seeing whether a value is in a range

print(1 < 2 and 2 < 3) # => True

print(2 < 3 and 3 < 2) # => False

# Chaining makes this look nicer

print(1 < 2 < 3) # => True

print(2 < 3 < 2) # => False

We can run this on variables as well

a = 5

print(a == 5) # => True

print(a < 3) # => False

print(2 < a) # => True

This works because the left and right side of a comparison are each treated as independent expressions.

is vs. ==: is checks if two variables refer to the same object, but == checks if the objects pointed to have the same values.

a = [1, 2, 3, 4] # Point a at a new list, [1, 2, 3, 4]

b = a # Point b at what a is pointing to

print(b is a) # => True, a and b refer to the same object

print(b == a) # => True, a's and b's objects are equal

b = [1, 2, 3, 4] # Point b at a new list, [1, 2, 3, 4]

print(b is a) # => False, a and b do not refer to the same object

print(b == a) # => True, a's and b's objects are equal

the "If" statement

We can have code that changes behavior based on conditions being met, to put it simply, whether there is a True or a False value present

Try out the following example

# Let's just make a variable

some_var = 5

# Here is an if statement. Indentation is significant in Python!

# Convention is to use four spaces, not tabs.

# This prints "some_var is smaller than 10"

if some_var > 10:

    print("some_var is totally bigger than 10.")

elif some_var < 10: # This elif clause is optional.

    print("some_var is smaller than 10.")

else: # This is optional too.

    print("some_var is indeed 10.")

Inside this there are three distinct blocks, an if block, an elif and an else

You can read the above code with the following in english:

if the value "some var" is greater than ten, then print "..."

otherwise, if the value of "some var" is less than 10, then print "..."

otherwise, print "...."

Here's another example showing that Python checks the branches of an if from the top down and runs only the first one that matches

some_var = 5

if some_var < 10:

    print("I am less than 10")

elif some_var < 20: # despite this statement being true, the below does not run

    print("I am also less than 20")

else:

    print("I shouldn't run")

print("I am outside of the if statement, I will print")

the elif and else statements are completely optional in a conditional, the following are examples showing that you can have an infinite number of elifs, but a maximum of 1 else

# example using a singular `if` and nothing more

print("Give a number")

user_number = int(input())

# we use int() to turn the string result of input into a number

if user_number < 10:

    print("I am less than 10!")

print("I will always print!")

# example using multiple `elif`s but no `else`

print("Give a number")

user_number = int(input())

if user_number < 10:

    print("I am less than 10!")

elif user_number < 20:

    print("I am less than 20, but at least 10!")

elif user_number < 30:

    print("in between 20 and 30!")

print("I will always print!")

# example showing that the if takes only a boolean

if True:

    print("I will always print!")

else:

    print("I will never print")

# example showing a False will never run

if False:

    print("I will never print!")

else:

    print("I will always print")
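The examples above need typed input, which makes them awkward to re-run with many values. As a sketch, the same if/elif/else chain can be wrapped in a function (`def`) purely so it can be called with several numbers. The function name `describe` and its messages are made up for this example.

```python
def describe(number: int) -> str:
    """Return a message describing which range the number falls in."""
    if number < 10:
        return "less than 10"
    elif number < 20:
        return "at least 10, but less than 20"
    else:
        return "20 or more"

print(describe(5))   # less than 10
print(describe(10))  # at least 10, but less than 20
print(describe(42))  # 20 or more
```

Only the first matching branch runs, so 5 never reaches the `number < 20` check even though that check would also be true.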

At this point try out challenge 3!

Loops

For loop

There are instances in which we do not want to have long messy unreadable code that can result from wanting to repeat an action.

# this is bad!

print("hi")

print("hi")

print("hi")

print("hi")

print("hi")

print("hi")

so instead, we have ways of repeating code over and over again, we can use a loop.

for i in range(6):

    print("hi")

typically we want something to change a little bit in each part of the loop. the i in this for loop is specifying the name of a variable, so we can use it in the loop

for i in range(5):

    print(i) # should print 0, 1, 2, 3, 4 all on separate lines

just like in conditional statements, loops are indent sensitive, the following should produce an error

for i in range(6):

print("hi") # indentation error!!!

Lets break down each part of the for loop

- for: the keyword telling Python we want to create a loop that iterates a fixed number of times

- i: we are defining a variable with name i to run through this loop

- in: just another part of the syntax in a for loop

- range(6): creates an iterable that goes from 0 through 5 (6 is exclusive). We will talk about the difference between an iterable and a list in a future chapter.

  - For the purposes of a loop, all lists are iterables, but not all iterables are lists. A range can be indexed like a list (range(6)[0] is 0), but it is not a list: it has no append method, for example. If you wish to treat any iterable as a list, just wrap it in the list function like so:

a = range(6)

print(a) # => range(0, 6)

print(list(a)) # => [0,1,2,3,4,5]

since lists are iterables, we can also do the following:

a = ["sheep", "dog", "cat", "mouse"]

for i in a:

    print(f"{i} is an animal")

note that while convention says that loop variables should be i, j, k, l, there is no reason that they can't be anything else

for cool_var in range(7):

    print(f"This works! {cool_var}")

sometimes, we may need to keep track of the index of some iterable. We can take advantage of len() from before to help us solve these problems:

a = ["sheep", "dog", "cat", "mouse"]

# what if we wanted only the even indices?

for i in range(len(a)):

    if i % 2 == 0: # check if even

        print(a[i])
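Another way to keep track of the index, shown here only as an optional sketch, is Python's built-in enumerate(), which gives the index and the item together on each pass. The names index, animal, and even_items are chosen just for this example.

```python
a = ["sheep", "dog", "cat", "mouse"]

even_items = []
for index, animal in enumerate(a):
    if index % 2 == 0:  # keep only items at even indices
        even_items.append(animal)

print(even_items)  # ['sheep', 'cat']
```

This does the same job as looping over range(len(a)), without having to write a[i] inside the loop.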

While Loops

In control flow, we may want to have something run continuously until a certain condition is met. The basic syntax of a while loop is the following:

while condition:

    action

Just like with for loops and if statements, indentation matters for Python to parse the loop correctly.

Here is an example while loop

user = ""

while user != "q":

    print("I will keep printing until you enter 'q'")

    user = input()

When possible, you should use for loops as opposed to while loops: a for loop stops on its own once it runs out of items, so it is harder to loop forever by accident, and it is usually faster than a while loop with a hand-written counter. However, in cases where you need to keep checking a certain condition (as above) or are modifying the list/string/iterable that you are iterating through, a while loop is the better fit.
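Here is a rough sketch of the "modifying the list while looping" case. The queue contents are made-up task names, and pop(0) is used on the assumption that we want to take items from the front.

```python
queue = ["intake", "shooter", "climber"]  # hypothetical task names
processed = []

while len(queue) > 0:       # keep going until the list is empty
    task = queue.pop(0)     # removes the first item, so the list shrinks
    processed.append(task)

print(processed)  # ['intake', 'shooter', 'climber']
print(queue)      # []
```

Because the list changes size on every pass, the condition is re-checked each time instead of relying on a fixed range.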

Breaking loops

Sometimes we want to exit a loop early when a condition is met. We can use the break keyword to leave a loop partway through:

for i in range(5):

    print(i)

    if i == 2:

        break # prevents us from printing 3 or 4

# this code should print 0, 1, 2 on separate lines

Rewriting the example from the while loop section to use break instead:

while True: # while true will loop forever!!

    print("I will keep printing until you enter 'q'")

    if input() == "q":

        break

Here's perhaps a more practical example:

password = "super secure"

for i in reversed(range(3)):

    if password == input():

        print("Correct!")

        break

    else:

        print("incorrect!")

        print(f"You have {i} tries left for your password")

In this instance, since break is called, the else statement is actually optional: when Python hits break it exits the loop immediately, so any code after the if block only runs when the password was wrong.

using this knowledge we can rewrite it as the following

password = "super secure"

for i in reversed(range(3)):

    if password == input():

        print("Correct!")

        break

    print("incorrect!") # note no else statement needed

    print(f"You have {i} tries left for your password")
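To see the effect of break without typing anything in, here is a sketch that swaps input() for a made-up list of guesses. The guesses list and the checked list are assumptions added only so the result can be inspected.

```python
password = "super secure"
guesses = ["hunter2", "super secure", "never checked"]  # stand-ins for input()

checked = []  # records which guesses the loop actually looked at
for guess in guesses:
    checked.append(guess)
    if guess == password:
        print("Correct!")
        break  # leave the loop, the remaining guesses are skipped
    print("incorrect!")  # only reached when the guess was wrong

print(checked)  # ['hunter2', 'super secure']
```

The third guess never shows up in checked, because break ended the loop as soon as the password matched.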

continue

We can also use the continue statement to skip the rest of the current pass and move straight on to the next iteration of the loop.

for i in range(5):

    print(f"I will be reached: {i}")

    continue # will stop execution of everything after, but continue the loop

    print("I will never be reached")

for i in range(20):

    print(i)

    if i % 2 == 0: # simple check if the number is even

        print("I'm even")

        continue

    print("I'm an odd number!")
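A common use of continue, sketched here with a made-up list name, is filtering: skip the items we don't care about and only do work on the rest.

```python
odd_numbers = []

for i in range(10):
    if i % 2 == 0:
        continue  # even number: skip the rest of this pass
    odd_numbers.append(i)  # only reached for odd numbers

print(odd_numbers)  # [1, 3, 5, 7, 9]
```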

After this, try out Challenge 4

Advanced Datatypes

The following are more advanced types of data that have more specific characteristics.

Tuple

Like a list, but immutable. This means that once a tuple is created, you cannot change its values.

tup = (1,2,3) # tuples are made with () instead of []

print(tup[0]) # => 1

tup = (1,2,3)

tup[0] = 3 # Raises a TypeError

Tuples of length one must be made with a comma

print(type((1)) ) # => <class 'int'>

print(type((1,)) ) # => <class 'tuple'>

print(type(()) ) # => <class 'tuple'>

You can do most of the list operations on tuples as well

tup = (1,2,3)

print(len(tup)) # => 3

print(tup + (4, 5, 6)) # => (1, 2, 3, 4, 5, 6)

print(tup[:2]) # => (1, 2)

print(2 in tup) # => True

# You can unpack tuples (or lists) into variables

a, b, c = (1, 2, 3) # a is now 1, b is now 2 and c is now 3

# You can also do extended unpacking

a, *b, c = (1, 2, 3, 4) # a is now 1, b is now [2, 3] and c is now 4

# Tuples are created by default if you leave out the parentheses

d, e, f = 4, 5, 6 # tuple 4, 5, 6 is unpacked into variables d, e and f

# respectively such that d = 4, e = 5 and f = 6

# Now look how easy it is to swap two values

e, d = d, e # d is now 5 and e is now 4

So why would you want to use a tuple?

To put it shortly, tuples are really good when you are using static typing, as each tuple has a specified length and a specified type for each element that doesn't change.
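As a rough illustration of tuples and type hints, the sketch below annotates a pair of floats with Tuple from the standard typing module. The midpoint function and its parameter names are hypothetical and exist only for this example.

```python
from typing import Tuple


def midpoint(a: Tuple[float, float], b: Tuple[float, float]) -> Tuple[float, float]:
    """Return the point halfway between two (x, y) points."""
    ax, ay = a  # unpack each tuple into its two parts
    bx, by = b
    return ((ax + bx) / 2, (ay + by) / 2)


print(midpoint((0.0, 0.0), (2.0, 4.0)))  # (1.0, 2.0)
```

The hint says exactly two floats go in and two floats come out, which a list annotation cannot express.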

Dictionaries

TODO: CLEANUP

# Dictionaries store mappings from keys to values

empty_dict = {}

# Here is a prefilled dictionary

filled_dict = {"one": 1, "two": 2, "three": 3}

print(filled_dict)

Note: keys for dictionaries have to be immutable types. This is to ensure that the key can be converted to a constant hash value for quick look-ups.

Immutable types include ints, floats, strings, and tuples.

invalid_dict = {[1,2,3]: "123"} # => Raises a TypeError: unhashable type: 'list'

valid_dict = {(1,2,3):[1,2,3]} # Values can be of any type, however.
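The hashing rule can be checked directly with the built-in hash() function. This is only a sketch, and the list_is_hashable variable is a name made up for the example.

```python
# Equal tuples always produce the same hash value
print(hash((1, 2, 3)) == hash((1, 2, 3)))  # True

try:
    hash([1, 2, 3])  # lists are mutable, so Python refuses to hash them
    list_is_hashable = True
except TypeError:
    list_is_hashable = False

print(list_is_hashable)  # False
```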

filled_dict = {"one": 1, "two": 2, "three": 3}

# Look up values with []

print(filled_dict["one"]) # => 1

Get all keys as an iterable with keys(). We need to wrap the call in list() to turn it into a list. We'll talk about those later.

Note: for Python versions <3.7, dictionary key ordering is not guaranteed. Your results might not match the example below exactly. However, as of Python 3.7, dictionary items maintain the order in which they are inserted into the dictionary.

filled_dict = {"one": 1, "two": 2, "three": 3}

print(list(filled_dict.keys())) # => ["three", "two", "one"] in Python <3.7

# => ["one", "two", "three"] in Python 3.7+

Get all values as an iterable with values(). Once again we need to wrap it in list() to get it out of the iterable.

Note: Same as above regarding key ordering.

filled_dict = {"one": 1, "two": 2, "three": 3}

print(list(filled_dict.values())) # => [3, 2, 1] in Python <3.7

# => [1, 2, 3] in Python 3.7+
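Since values() gives back an iterable, it can also go straight into a for loop without list(). A minimal sketch, with total being a name made up for the example:

```python
filled_dict = {"one": 1, "two": 2, "three": 3}

total = 0
for value in filled_dict.values():  # no list() needed just to loop
    total += value

print(total)  # 6
```

The sum is the same regardless of the order the values come out in.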

Check for existence of keys in a dictionary with in

filled_dict = {"one": 1, "two": 2, "three": 3}

print("one" in filled_dict) # => True

print(1 in filled_dict) # => False

Looking up a non-existing key is a KeyError

filled_dict = {"one": 1, "two": 2, "three": 3}

print(filled_dict["four"]) # KeyError

Use get() method to avoid the KeyError

filled_dict = {"one": 1, "two": 2, "three": 3}

print(filled_dict.get("one")) # => 1

print(filled_dict.get("four")) # => None

# The get method supports a default argument when the value is missing

print(filled_dict.get("one", 4)) # => 1

print(filled_dict.get("four", 4)) # => 4
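One place the default argument comes in handy is counting. The sketch below assumes a made-up list of votes and uses square-bracket assignment to store each count.

```python
votes = ["red", "blue", "red", "red"]  # hypothetical data
counts = {}

for color in votes:
    # get() returns 0 the first time a color is seen, avoiding a KeyError
    counts[color] = counts.get(color, 0) + 1

print(counts)  # {'red': 3, 'blue': 1}
```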

setdefault() inserts into a dictionary only if the given key isn't present

filled_dict = {"one": 1, "two": 2, "three": 3}

filled_dict.setdefault("five", 5) # filled_dict["five"] is set to 5

print(filled_dict["five"])

filled_dict.setdefault("five", 6) # filled_dict["five"] is still 5

print(filled_dict["five"])
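Because setdefault() also returns the value stored under the key, it can be used to group items into lists. This is just one possible sketch, and the animals list and by_letter name are made up for it.

```python
animals = ["sheep", "snake", "dog", "deer"]
by_letter = {}

for animal in animals:
    # create an empty list for a new first letter, then append to it
    by_letter.setdefault(animal[0], []).append(animal)

print(by_letter)  # {'s': ['sheep', 'snake'], 'd': ['dog', 'deer']}
```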

Adding to a dictionary

filled_dict = {"one": 1, "two": 2, "three": 3, "five": 5}

print(filled_dict)

filled_dict.update({"four":4}) # => {"one": 1, "two": 2, "three": 3, "five": 5, "four": 4}

print(filled_dict)
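For a single key, assigning with square brackets does the same job as update(), while update() is handy for adding several keys at once. A short sketch of both:

```python
filled_dict = {"one": 1, "two": 2, "three": 3}

filled_dict["four"] = 4                      # add one key directly
filled_dict.update({"five": 5, "six": 6})    # add several keys at once

print(filled_dict)
# {'one': 1, 'two': 2, 'three': 3, 'four': 4, 'five': 5, 'six': 6}
```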